If you are searching for a way to fully automate your customer service, stick with me: I will share everything we learned while training ChatGPT to reply to our customers automatically.

Let’s get started!

How to train ChatGPT to handle your Customer Service

First of all, we needed a source of truth for teaching ChatGPT how to answer user questions, so we thought about two approaches:

- Use the Knowledge base

- Use the conversations with our support

The first is the most reliable source of truth. The second can be very specific to a single case, and it would need to be better organized before it could give ChatGPT real, useful context.

Our first try was to ingest all the knowledge base articles into an index and then use the index to find an answer.

The Python code we used is quite simple.

It has two functions: the first adds the knowledge base (from a CSV file) to the index, and the second loads the index from a local JSON file and asks the question.

It uses the OpenAI API and LlamaIndex, with its CSV loader, to create the index.

    from pathlib import Path

    from llama_index import GPTSimpleVectorIndex, download_loader


    def addKB():
        # Download the CSV loader and read the knowledge base articles.
        SimpleCSVReader = download_loader("SimpleCSVReader")
        loader = SimpleCSVReader()
        documents = loader.load_data(file=Path('./kb.csv'))

        # Embed the articles into a vector index and save it locally.
        index = GPTSimpleVectorIndex(documents)
        index.save_to_disk('index.json')


    def query(question):
        # Load the saved index and ask it the question.
        index = GPTSimpleVectorIndex.load_from_disk('index.json')
        response = index.query(question)
        print(response)

First, we ran addKB() to read from the CSV file, and then we started using query() to see whether ChatGPT was ready to reply to customer service questions.
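To make "use the index to find an answer" a bit more concrete, here is a toy sketch of the retrieval step. It is not how LlamaIndex is implemented: a real vector index uses learned embeddings from the OpenAI API, while this stand-in uses plain word counts and cosine similarity. The article titles and texts are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': a bag-of-words count vector (an assumption, not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(articles):
    """Pre-compute one vector per article, as a vector index does at ingestion time."""
    return [(title, embed(title + " " + body)) for title, body in articles.items()]


def retrieve(index, question, top_k=1):
    """Return the titles of the top_k articles most similar to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [title for title, _ in ranked[:top_k]]


# Hypothetical knowledge base entries, invented for this example.
articles = {
    "Pricing plans": "Each plan includes a number of teammates and a monthly price.",
    "Install live chat": "Copy our snippet into your website to show a chat icon.",
}
index = build_index(articles)
print(retrieve(index, "How much is the monthly price for my teammates?"))
```

The retrieved article is then handed to the language model as context, so an answer can only be as good as the article that was matched.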

Is ChatGPT Ready to handle Customer Service yet?

Let’s check how ChatGPT would have performed against a human on real cases.

At Customerly, we provide customer support, marketing automation, and customer satisfaction tools for B2B SaaS.

It might be tricky to find the right answer for the customer, so I was pretty curious to see how that would translate to us.

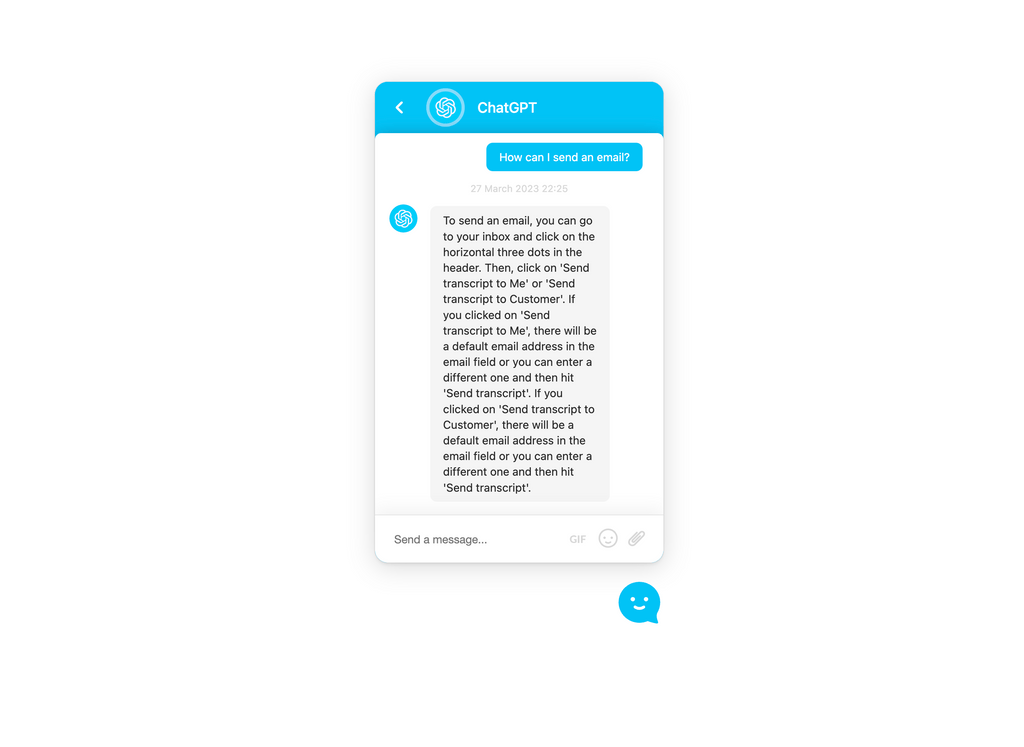

#1 Question: Product related

Q: How can I send an email?

Now, a human would ask for clarification, since the user might want to send different kinds of emails: an email marketing campaign, an automated email, or a one-to-one message.

In this case, ChatGPT took its chances and explained how to send a chat transcript to customers.

Totally useless. The customer would be unhappy about this reply.

CSAT ☆☆☆☆☆
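One way to imitate what a human would do here is to hold back the answer when a question matches several topics equally well, and ask a clarifying question instead. The sketch below only illustrates that idea: the topic list, the keyword matching, and the `answer_or_clarify` helper are all hypothetical and were not part of our test.

```python
import re

# Hypothetical topics and trigger keywords, invented for this example.
TOPICS = {
    "an email marketing campaign": {"email", "campaign", "newsletter"},
    "an automated email": {"email", "automation", "automated", "workflow"},
    "a one-to-one email": {"email", "reply", "conversation"},
    "the chat widget": {"chat", "widget", "icon"},
}


def matching_topics(question):
    """Return every topic tied for the highest keyword overlap with the question."""
    words = set(re.findall(r"[a-z0-9]+", question.lower()))
    scores = {topic: len(words & keywords) for topic, keywords in TOPICS.items()}
    best = max(scores.values())
    if best == 0:
        return []
    return [topic for topic, score in scores.items() if score == best]


def answer_or_clarify(question):
    """Answer only when exactly one topic matches best; otherwise ask back."""
    topics = matching_topics(question)
    if len(topics) == 1:
        return f"ANSWER about {topics[0]}"
    if not topics:
        return "CLARIFY: Could you tell me a bit more about what you need?"
    if len(topics) == 2:
        options = " or ".join(topics)
    else:
        options = ", ".join(topics[:-1]) + f", or {topics[-1]}"
    return f"CLARIFY: Do you mean {options}?"


print(answer_or_clarify("How can I send an email?"))
print(answer_or_clarify("How can I send an automated email?"))
```

With the question from this test, all three email topics tie, so the helper asks which kind of email the user means rather than guessing.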

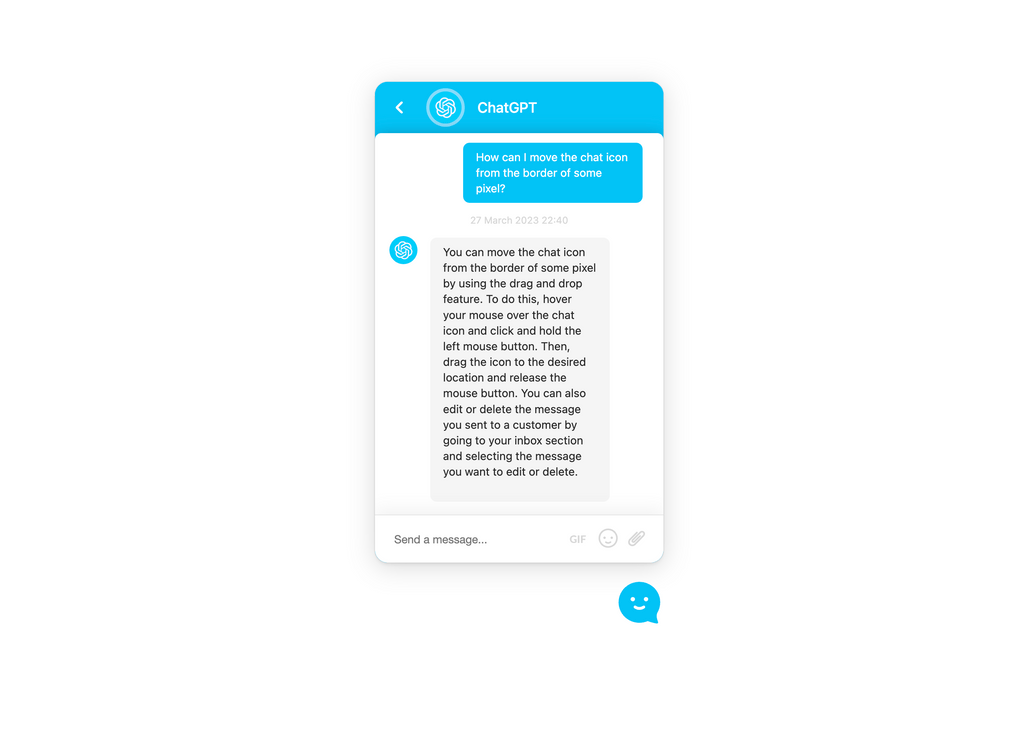

#2 Question: Technical info

Q: How can I move the chat icon from the border of some pixels?

I needed this piece of code for the sake of this article, so I asked ChatGPT how to move the chat icon a few pixels away from the border, and the reply was hilarious.

We have a piece of code you can add to your chat implementation to move the icon away from the border of the page. What ChatGPT replied was not the answer I was expecting 🤣🤌

CSAT ☆☆☆☆☆

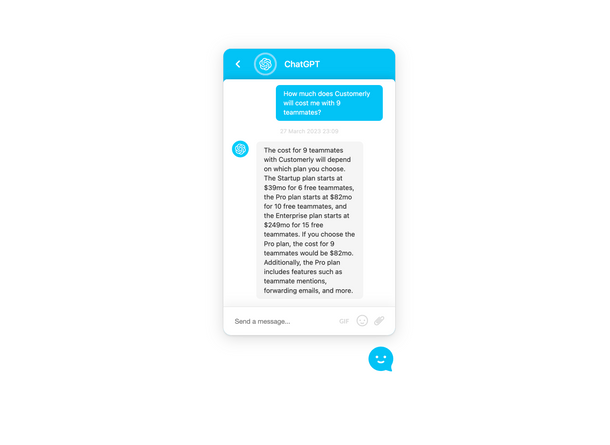

#3 Question: Pricing

Q: How much does Customerly will cost me with 9 teammates?

This is a pre-sales question, so it’s key that the answer does not push potential customers away or report inaccurate information.

And this time, it was right!

Cost of this interaction is 4310 LLM tokens and 12 embedding tokens.

CSAT ⭐️⭐️⭐️⭐️⭐️
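To translate token counts like these into money, you multiply each count by its per-token price. The sketch below shows the arithmetic; the default prices (USD per 1,000 tokens) are assumptions for illustration only, since this article does not state which model or price list applied, so replace them with the rates on your own OpenAI invoice.

```python
def interaction_cost(llm_tokens, embedding_tokens,
                     llm_price_per_1k=0.02, embedding_price_per_1k=0.0004):
    """Convert token counts into dollars.

    The default prices are assumed values (USD per 1,000 tokens),
    not figures taken from the article.
    """
    llm_cost = llm_tokens / 1000 * llm_price_per_1k
    embedding_cost = embedding_tokens / 1000 * embedding_price_per_1k
    return llm_cost + embedding_cost


# Token counts reported for the pricing question above.
cost = interaction_cost(llm_tokens=4310, embedding_tokens=12)
print(f"${cost:.4f}")
```

Under these assumed prices, almost the entire cost comes from the LLM tokens; the embedding of the question itself is negligible.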

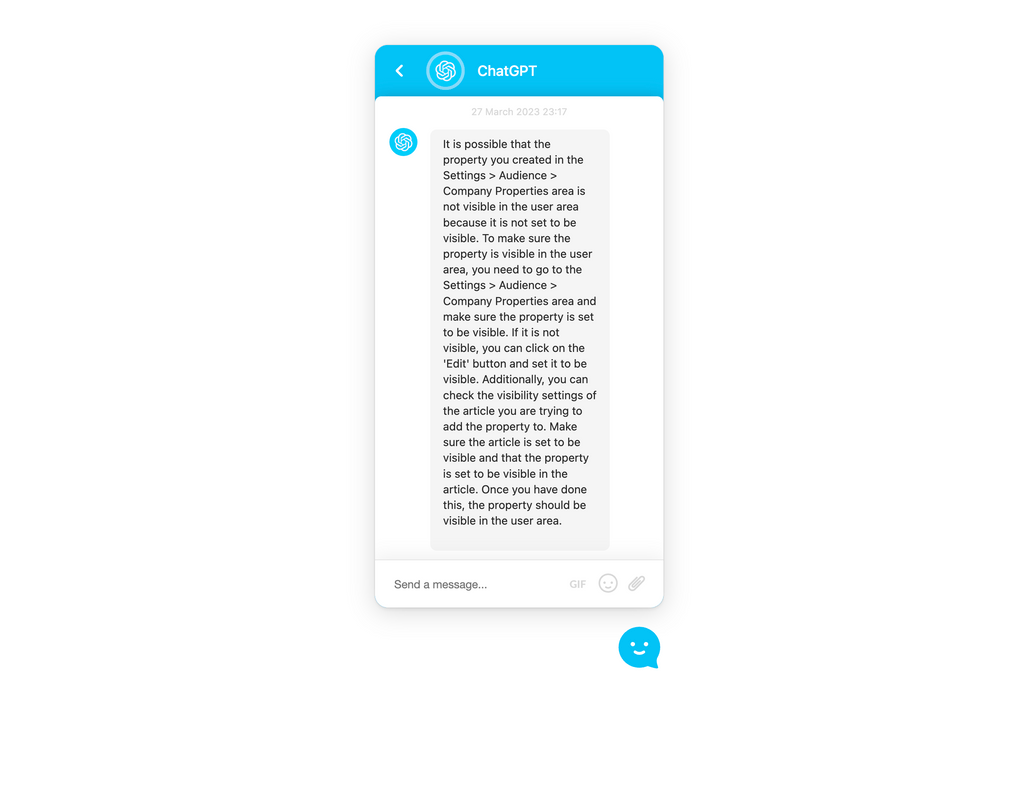

#4 Question: What could be the problem?

This is an actual user reporting an issue with Customerly, and I was curious to see how it would respond.

This is the worst-case scenario with a customer asking for specific help.

This is what the user asked:

Q: “I tried to add a new property to a company from the user’s profile page. When I add it and click on “Create Property,” nothing happens.

First, I assumed the problem was caused by a property with that name already existing (I’ve created it in the Settings > Audience > Company Properties area). Still, before creating it again in the user area, I used the search feature available and couldn’t find the one I’d previously created. What could be the problem? 🤔”

The reply is kinda-related and then completely unrelated.

It does not solve the customer’s issue at all, and it gives a mixed reply: it first mentions the status of the property (on the right track) and then the status of an article (completely off track).

CSAT ☆☆☆☆☆

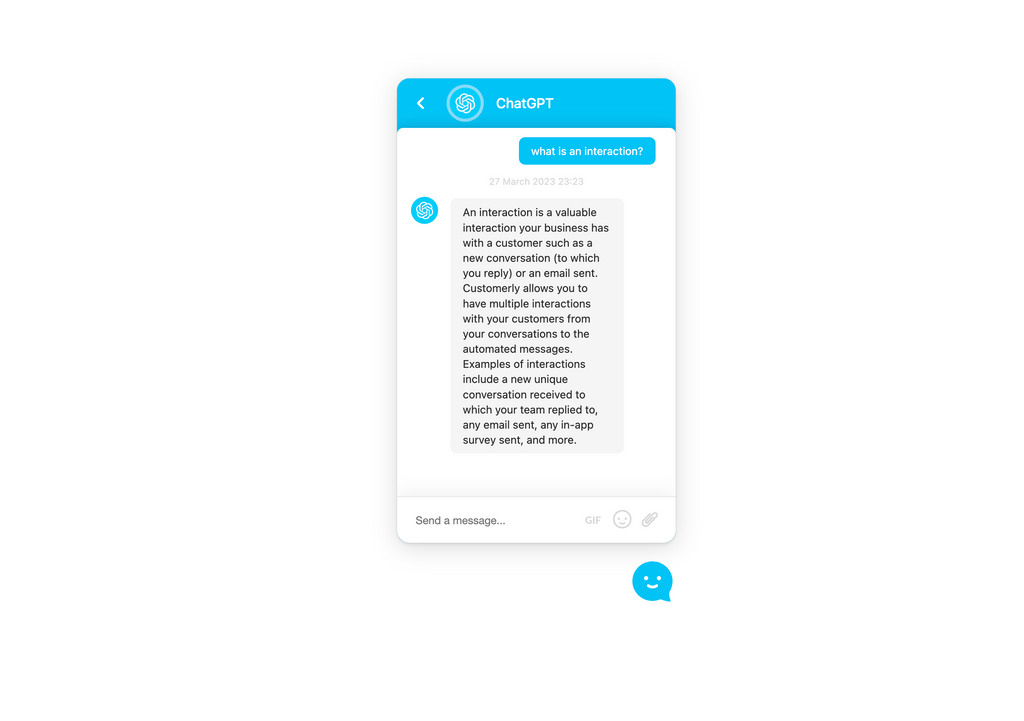

#5 Question: Pricing FAQ

Q: what is an interaction?

This question relates to our pricing model, which is based on interactions, and it was quite easy to answer.

Well done, ChatGPT.

Cost of this interaction is 3701 LLM tokens and 4 embedding tokens.

CSAT ⭐️⭐️⭐️⭐️⭐️

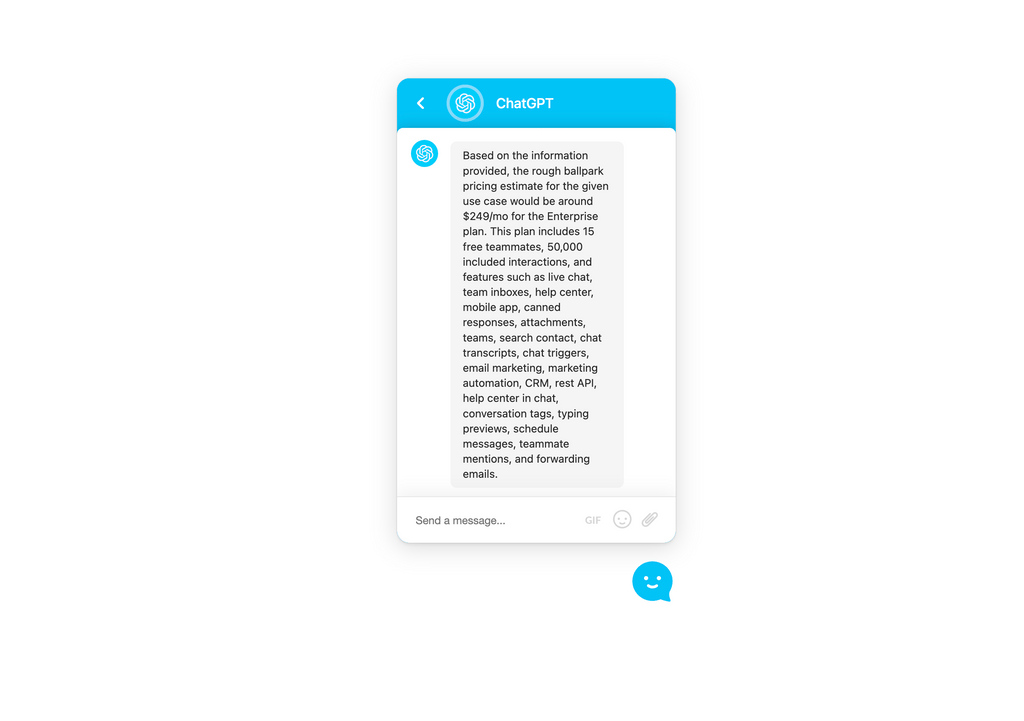

#6 Question: Actual Pre-Sale question!

This was a question we received from a lead, and it was so detailed I wondered how it could reply.

Q: Hey there! I was wondering if you could give me a rough pricing estimate. Our used case:

– 42 agents

– Product tours

– Chat Bot

– Help Centre with 200+ articles for users & visitors

– People reached per month: 11k+

I would need a rough ballpark for comparison without big sales call please ;)

It selected the right Enterprise plan, but it didn’t calculate the additional cost for the 27 agents that exceed those included in the plan; it just shared the $249 price that covers the included agents.

Good, but definitely not the right answer.

CSAT ⭐️⭐️☆☆☆
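The estimate the lead was after is the base price plus a per-seat charge for the extra agents. Here is a minimal sketch of that arithmetic. The $249 base price and the 27 exceeding agents come from the case above; the price per additional agent is a placeholder, because the real figure is not stated in this article, and the function name is hypothetical.

```python
def plan_estimate(agents, included_agents, base_price, price_per_extra_agent):
    """Base plan price plus a per-seat charge for agents beyond the included ones."""
    extra_agents = max(0, agents - included_agents)
    return base_price + extra_agents * price_per_extra_agent


# 42 agents with 27 exceeding the plan implies 15 included agents.
# The per-agent price below is a placeholder, not Customerly's real price.
PLACEHOLDER_PRICE_PER_EXTRA_AGENT = 10

estimate = plan_estimate(
    agents=42,
    included_agents=15,
    base_price=249,
    price_per_extra_agent=PLACEHOLDER_PRICE_PER_EXTRA_AGENT,
)
print(estimate)
```

Whatever the real per-agent price is, the point stands: quoting only the base price leaves out the charge for 27 of the 42 agents.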

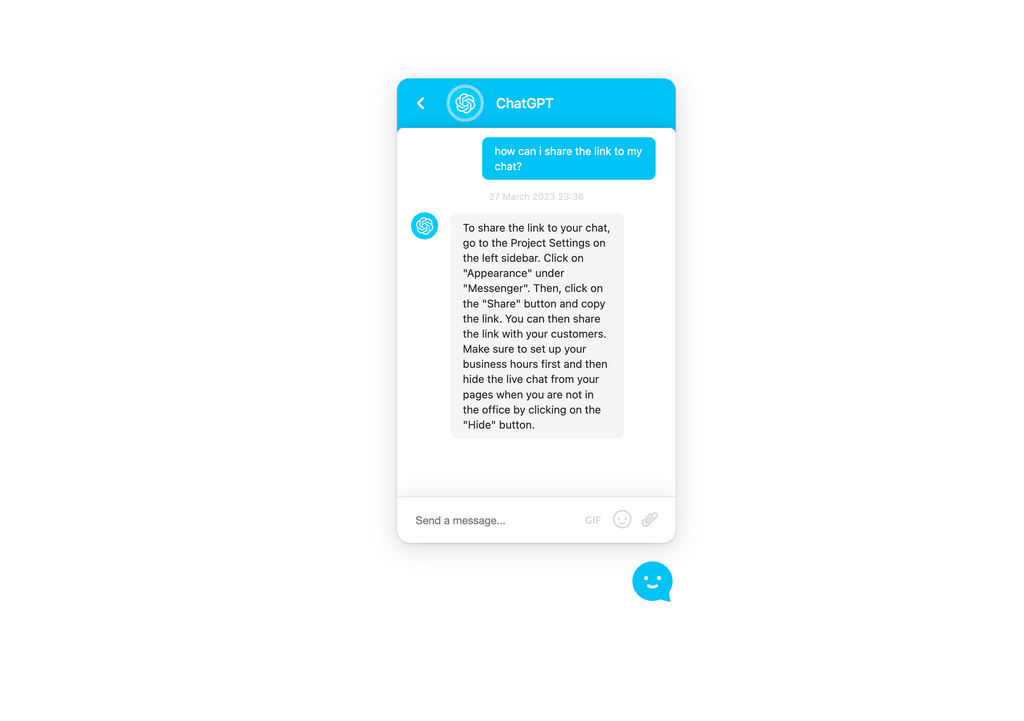

#7 Question: Onboarding Question

The user wanted to share a link with their customers to chat, which is something we described in this article.

The answer is completely unrelated and should have suggested going to the Project Settings, then General Settings.

Again, it mixed things up and gave the customer wrong information.

CSAT ☆☆☆☆☆

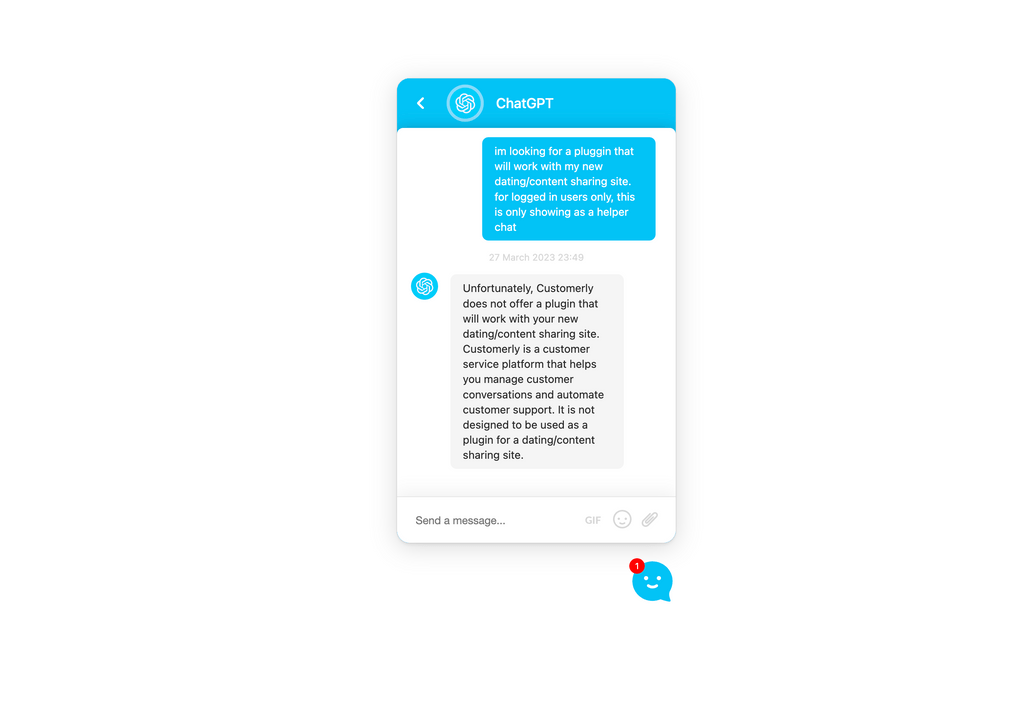

#8 Question: Out-of-scope feature

It’s time to see how it would answer an out-of-scope pre-sales question.

This was the actual question, yes, with the typos 🤪

im looking for a pluggin that will work with my new dating/content sharing site. for logged in users only, this is only showing as a helper chat

I wouldn’t reply this way, but it is accurate enough.

CSAT ⭐️⭐️⭐️☆☆

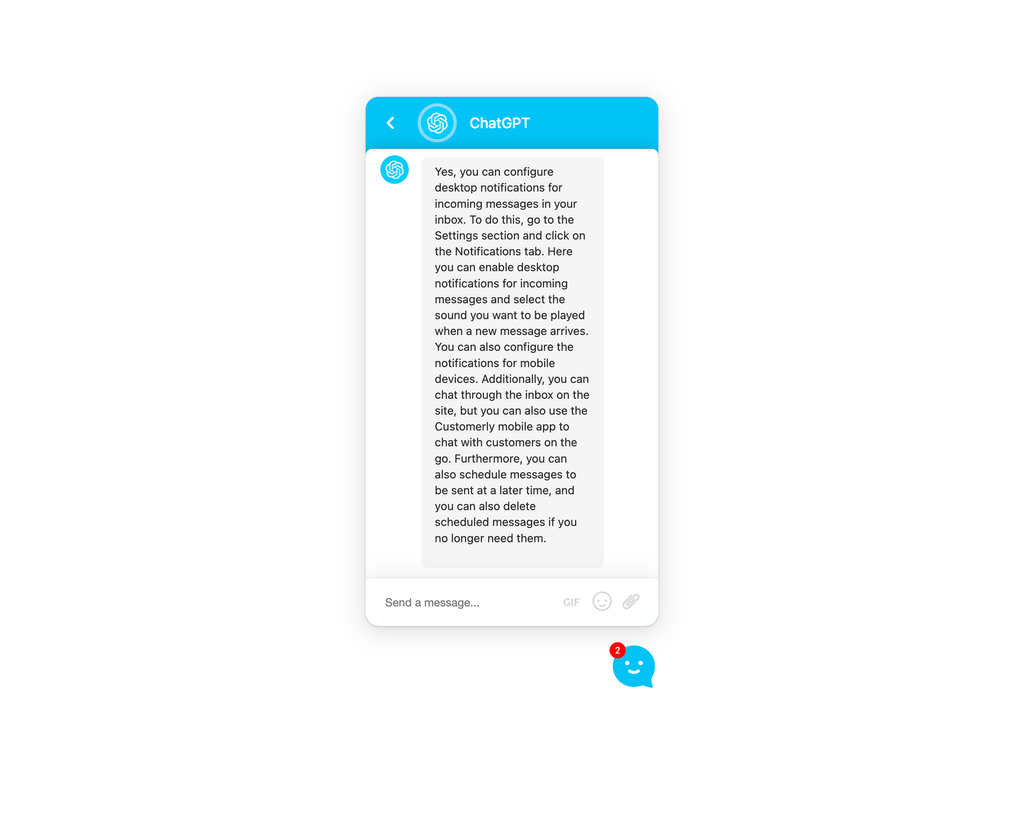

#9 Question: Multiple questions and different alternatives

Q: Hello, I have configured the chat service and it works perfectly, is there a desktop notification option or some other form? Is it only possible to chat through the inbox on the site?

The first part of the answer, about notifications, was good, but then it invented the customization of the sounds??

The second question was answered well, but then it started adding details about scheduled messages, which were unrelated.

Cost of this interaction is 4322 LLM tokens and 37 embedding tokens.

CSAT ⭐️⭐️⭐️⭐️☆

Conclusions

The OpenAI API cost of this procedure, considering the index embedding and the 9 queries, is $1.79.

That is definitely a low cost for handling all these tickets automatically.

But what are you trading here?

Customer Satisfaction.
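To put a number on that trade-off, the star ratings from the nine cases can be tallied next to the API bill. The scores and the $1.79 total are taken from this article; the "4 stars or more" threshold for an acceptable answer is an assumption made for the sake of the example.

```python
# Star ratings given to the nine answers above, in order (#1 to #9).
csat_scores = [0, 0, 5, 0, 5, 2, 0, 3, 4]
total_api_cost = 1.79  # USD, index embedding plus the nine queries

average_csat = sum(csat_scores) / len(csat_scores)
# Assumed threshold: an answer counts as acceptable at 4 stars or more.
acceptable = sum(1 for score in csat_scores if score >= 4)
cost_per_ticket = total_api_cost / len(csat_scores)

print(f"Average CSAT: {average_csat:.2f} / 5")
print(f"Answers rated 4 stars or more: {acceptable} of {len(csat_scores)}")
print(f"API cost per ticket: ${cost_per_ticket:.2f}")
```

Keep in mind that the $1.79 includes the one-off index embedding, so the per-ticket figure is an amortized estimate rather than the marginal cost of one more question.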

When a pre-sales question is answered that way, you might lose thousands of dollars a year.

When customers follow your wrong instructions, how do you think they will feel?

You wasted their time. Their satisfaction is almost lost, and they are now considering going with your competitor.

We believe ChatGPT will shape the future of Customer Support, but we are still far from letting it handle all the support inquiries for you.

In the following weeks, we’ll try to train a more complex model based on customer inquiries and agent replies to see whether it improves performance.

If you want to get on top of your support game, subscribe to our newsletter to get the next ChatGPT article as soon as we publish it.

Now the real question: Would you let ChatGPT answer your customer service for a fraction of the cost?

Tag me on Twitter @ilucamicheli with your thoughts.

To be continued…